Limit gradient scale in chart to something reasonable instead of autoscaling to silly values

Completed

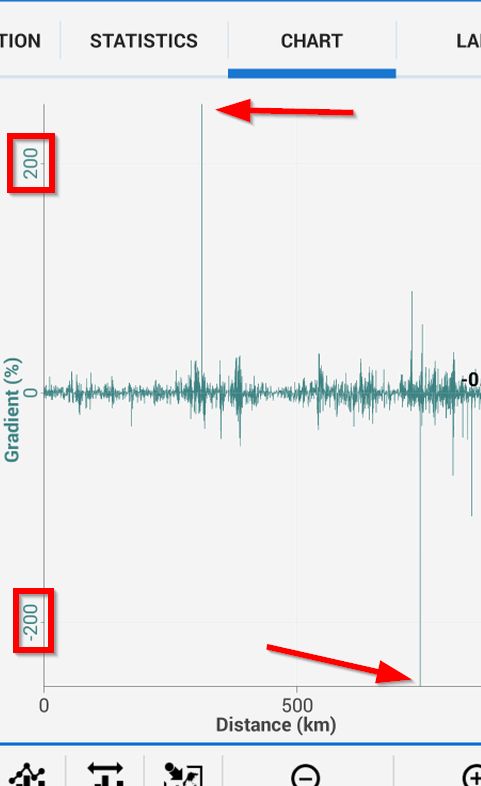

Does it make sense to autoscale the chart gradient to +/-200%? In my case (see screen capture) these are nonsense values calculated by Garmin Basecamp, and I have no easy or convenient control over them. Maybe clamp any outlying values to no more than +/-90%, or even ignore them altogether? What does a value > 90 even mean physically? Maybe it could be an import setting? Only then autoscale the remaining values. Thank you.
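To make the request concrete, here is a minimal sketch of clamping (or dropping) outliers before the axis range is chosen. The function name, the `drop_outliers` flag, and the sample values are made up for illustration; this is not how Locus Map computes its chart.

```python
def gradient_axis_range(gradients_pct, limit_pct=90.0, drop_outliers=False):
    """Return (low, high) chart bounds for gradient values given in percent.

    Hypothetical helper: values beyond +/-limit_pct are either clamped to
    the limit or ignored before the range is autoscaled.
    """
    if drop_outliers:
        kept = [g for g in gradients_pct if abs(g) <= limit_pct]
    else:
        kept = [max(-limit_pct, min(limit_pct, g)) for g in gradients_pct]
    if not kept:
        return (0.0, 0.0)
    return (min(kept), max(kept))


# Invented samples, including one nonsense value of -200%.
samples = [2.0, -3.5, 6.0, -200.0, 4.5]
print(gradient_axis_range(samples))                      # (-90.0, 6.0)
print(gradient_axis_range(samples, drop_outliers=True))  # (-3.5, 6.0)
```

Clamping still stretches the axis to the limit, so ignoring the outliers gives the more readable chart in this example.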

Based on this idea, http://help.locusmap.eu/topic/improve-change-of-altitude-track-style-mode , I created a much stronger gradient filter that eliminates huge jumps caused by even a small change of altitude over a really short distance.

You'll see it in the next version, and I believe it will be a lot better now.

Thank you - yes it looks a lot better now in 3.7.0.

Perfect, glad to hear it.

I just created a new track with 3.13.0 and BRouter, mainly with the fast bike profile (see attached). It was all a normal process, but there are 3 huge negative spikes in the gradient that make the chart unusable. A gradient of -400% is not possible, I think (!). I won't follow that route on my bike over the cliff. It would be an additional improvement to completely ignore these impossible values (as I originally suggested) before any filtering step.

PS. I had to add ".txt" to the file name for it to be accepted. Do you have any control over which file types are accepted? Surely a GPX file is a reasonable file to upload, and it shouldn't take a silly ".txt" trick to fool the website into accepting it. This may be confusing for other forum users too.

It is perhaps possible that, during a longer trip stop with logging paused, the pressure drifts (easily equivalent to 20 m of altitude per hour for barometric altitude), so that the first points after logging resumes show a sudden change in apparent altitude.

Libor - I just checked the map - indeed the 0m gradient spike occurred at a location where I was off the bike for 10 minutes although I didn't pause track recording, so the pressure should have only varied very slightly in that time.

Hmm, this is a very good point, and quite hard to solve, I think. Any ideas? Compare by the time needed to travel the leg? Not ideal for some climbing activities :).

Note that 20 m/hour is a typical value that can last many hours before a warm front. Before and after a cold front the rate can be several times higher, even if the duration is much shorter.

Edit: what about interrupting the gradient calculation in a paused, autopaused, or stationary-like state? Interpolation could be used instead. It would eliminate such jumps.
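A rough sketch of that suggestion follows, assuming a "stationary-like" leg can be recognised simply by its very short horizontal distance. The threshold `min_leg_m`, both function names, and the sample track are assumptions for illustration, not anything taken from Locus Map.

```python
def fill_gaps(values):
    """Replace None entries by linear interpolation between known neighbours."""
    known = [i for i, v in enumerate(values) if v is not None]
    if not known:
        return [0.0] * len(values)
    out = list(values)
    for i, v in enumerate(values):
        if v is not None:
            continue
        prev = max((k for k in known if k < i), default=None)
        nxt = min((k for k in known if k > i), default=None)
        if prev is None:          # gap at the start: copy the first known value
            out[i] = values[nxt]
        elif nxt is None:         # gap at the end: copy the last known value
            out[i] = values[prev]
        else:
            t = (i - prev) / (nxt - prev)
            out[i] = values[prev] + t * (values[nxt] - values[prev])
    return out


def leg_gradients(points, min_leg_m=5.0):
    """points: list of (cumulative_distance_m, altitude_m).

    Returns one gradient in percent per leg. Legs shorter than min_leg_m
    are treated as stationary: their gradient is not computed but
    interpolated from the neighbouring legs.
    """
    grads = []
    for (d0, a0), (d1, a1) in zip(points, points[1:]):
        run = d1 - d0
        grads.append(100.0 * (a1 - a0) / run if run >= min_leg_m else None)
    return fill_gaps(grads)


# Invented track: a 4 m altitude jump over 0.5 m would be 800% if computed directly.
track = [(0.0, 200.0), (100.0, 202.0), (100.5, 206.0), (200.5, 208.0)]
print(leg_gradients(track))  # [2.0, 2.0, 2.0]
```

This only cleans up the gradient chart. The altitude step itself stays in the recorded data.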

Yet another example of an invalid elevation measurement is attached. New Samsung phone, nothing extraordinary. As shown below, the second track point dips by 32 metres. The 3rd and subsequent track point elevations stabilize around the correct ~213 m. I don't see any heavy filtering in the chart: the gradient is displayed as -60%. Backstory to this track record: exit house, turn on phone, turn on Locus (settings: auto track record, GPS enabled at start), tap Satellite icon-button, wait for GPS to lock (~20s), ride, enjoy.

I'm not convinced this topic is completed. No comment from @menion on the latest example? I don't see evidence of a weighted average of the nearest 10 points.

@menion "The chart is already heavy filtered. My algorithm is based on making the weighted average from before and after 250 meters (or nearest 10 points)"

@menion 3 years ago "I absolutely agree that such value is total nonsense for bike ride."

What if the filter took the activity profile into account?
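For reference, a weighted average over 250 m before and after each point, as in the quote above, could look roughly like the sketch below. The triangular weighting and the function name are assumptions, the "nearest 10 points" fallback is left out, and the real Locus Map algorithm may differ.

```python
def smooth_altitude(points, window_m=250.0):
    """points: list of (cumulative_distance_m, altitude_m).

    Each altitude is replaced by a weighted average of all points closer
    than window_m along the track. Weights fall linearly from 1 at the
    point itself to 0 at the window edge (an assumed weighting).
    """
    smoothed = []
    for d_i, _ in points:
        total = 0.0
        weight_sum = 0.0
        for d_j, a_j in points:
            gap = abs(d_j - d_i)
            if gap < window_m:
                w = 1.0 - gap / window_m
                total += w * a_j
                weight_sum += w
        # weight_sum is at least 1.0 because the point itself has gap 0.
        smoothed.append(total / weight_sum)
    return smoothed


# Invented track with a 32 m dip at the second point, spaced 100 m apart.
track = [(0.0, 213.0), (100.0, 181.0), (200.0, 213.0), (300.0, 213.0), (400.0, 213.0)]
print(smooth_altitude(track))
```

With these invented points the second altitude rises from 181 m to roughly 199.7 m. The dip is reduced but not removed, so a filter of this kind would still leave a visible gradient spike when the points are far apart.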

@Menion,

Do you filter the altitude points on the time scale, or the resulting gradient? As time derivatives of noisy signals are much noisier than the signals themselves, it would make sense to heavily filter the gradient instead of heavily filtering the altitude, which could damage real values.
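A quick worked example of why the derivative is noisier: if each altitude reading can be off by some amount, two neighbouring points can err in opposite directions, and the resulting gradient error grows as the leg gets shorter. The function name and the 1 m noise figure are assumptions for illustration only.

```python
def worst_case_gradient_error_pct(altitude_noise_m, leg_length_m):
    """Worst-case gradient error in percent for one leg.

    Assumes both end points may be off by up to altitude_noise_m in
    opposite directions, so the rise can be wrong by twice that amount.
    """
    return 100.0 * (2.0 * altitude_noise_m) / leg_length_m


# Assumed altitude noise of 1 m, evaluated for three leg lengths in metres.
for leg in (5.0, 50.0, 250.0):
    print(leg, worst_case_gradient_error_pct(1.0, leg))
```

With 1 m of altitude noise the possible error is 40% on a 5 m leg but under 1% on a 250 m leg, which is why short legs dominate the spikes.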

I filter both values. A little crazy is that the altitude values are filtered and these filtered values are then used for computing the gradient.

Thanks, Andrew, for the file. All values look good except the start of the track, as you wrote. I noticed the same issue on my device as well. It is generally not a problem of the strength of the current filter; GPS just sometimes needs more time to stabilize. Interestingly, Locus Map currently ignores the first 10 locations from GPS marked as "fixed" when you enable track recording. The 11th location is the first one recorded, so this should eliminate the worst cases of invalid horizontal/vertical location values. It seems this is still not enough sometimes.

So the solution?

Hmm, my idea: analyze the track right after track recording stops, compare it with elevation values from HGT files, and if they match (meaning the user moved on the surface), find and fix just these huge peaks based on the HGT values? Most probably fix just the values at the start of the track or after a pause in recording.
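A minimal sketch of this idea, assuming the HGT elevation for every track point has already been looked up elsewhere. The function name, both thresholds, and the sample values are hypothetical and only show the shape of the check.

```python
from statistics import median


def repair_with_dem(recorded, dem, match_m=15.0, spike_m=25.0):
    """recorded, dem: equal-length lists of altitudes in metres.

    dem is assumed to hold HGT-derived elevations for the same points.
    If the track follows the surface (small typical deviation from dem
    after removing a constant offset), points deviating by more than
    spike_m are replaced by the dem value plus that offset.
    """
    diffs = [r - d for r, d in zip(recorded, dem)]
    offset = median(diffs)
    spread = median([abs(x - offset) for x in diffs])
    if spread > match_m:
        return list(recorded)  # does not follow the surface: leave untouched
    return [d + offset if abs(r - d - offset) > spike_m else r
            for r, d in zip(recorded, dem)]


# Invented values: the second recorded point dips by 32 m.
recorded = [213.0, 181.0, 212.0, 214.0, 213.0]
dem = [210.0, 210.0, 210.0, 211.0, 210.0]
print(repair_with_dem(recorded, dem))  # [213.0, 213.0, 212.0, 214.0, 213.0]
```

Using the median keeps a single bad point from shifting the offset, and the surface check leaves tracks such as flights untouched.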

I'm sure that Libor will have some really complicated, sophisticated "signal-filtering" system for me. If so, maybe pointing me to some useful texts on "how to solve similar use-cases" may help. Thanks.
